`ch(n)` is an arbitrary fixed child of `n` (let’s call it the “left” child) Let’s talk about the rest of what’s going on in that beast: Our two inner nodes `n(1,1)` and `n(1,2)` describe the walk from root to “wood.” The leaf node at the end of this walk is `n(1,3)`. Remember that word 1 is “wood” (see above). Using our “wood chuck” example, let’s look at `n(1,j)`. This means we’ll have slightly different `L(w)` values for each word: `n(w,j)` describes the j-th node on the path from the root to w Okay… this obviously needs some explanation.

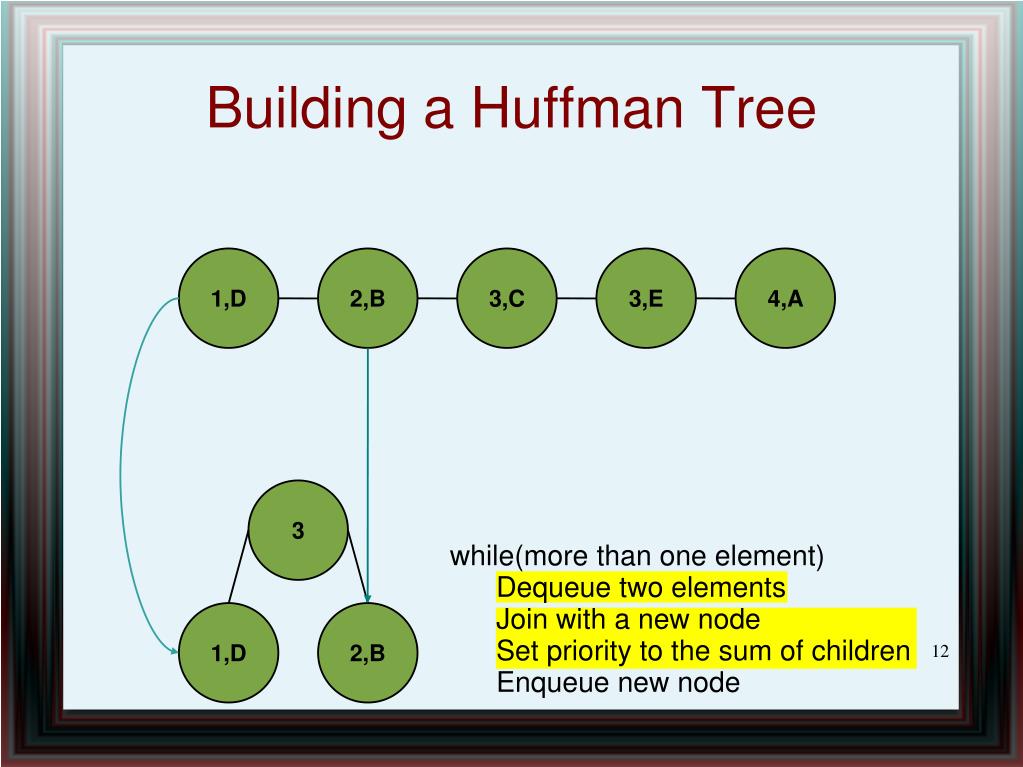

How do we cut down the updates? We use the Huffman tree to redefine our probability optimization function: Rather than update all words in the vocabulary during training, only `log(W)` words are updated (where `W` is the size of the vocabulary). The “hierarchical softmax” approach is one method proposed to address this inefficiency. You can see in the denominator that we have an operation involving all `W` word vectors, hence the inefficiency. This gets a little more complicated when we are using multiple words for the “Skip gram” and “Continuous Bag of Words” methods, but the basic idea is the same. Is the output vector of the training word we want to predict, given, which is the vector of the input word we are using for the basis of prediction (both vectors are initially random, and are learned during training). This is because of the way in which we are trying to represent the probability of seeing an output word `W_O` given an input word `W_I`. In an un-optimized approach, at every backpropagation step, a matrix with a size of the entire vocabulary (`W`) times the word vector size (`N`) will be updated. The goal of this method in word2vec is to reduce the amount of updates required for each learning step in the word vector learning algorithm. Note that this part of the post still uses the “wood chuck” vocabulary I defined in part 1. In this case, we want to keep the full words in tact. In typical applications of the Huffman coding scheme, we’d be encoding characters. Note that we’re encoding full words here. So now we have a nice Huffman tree that provides binary codes for each full word in the vocabulary. Here’s what the tree looks like in tree form: Or you can just skip the excitement and the fun and click on “end” to skip to the final state of memory after this part of the process. Try to follow the code above and step through this to understand how we’re building the tree. You can either click “play” and watch the magic happen, or you can click “next” for each step through of each change in memory in the algorithm. The “grayer” columns (indices 9 to 18) are used for non-leaf nodes in the tree. Each white column in this table represents one of our 8 words. Step through the below animation for a walkthrough of how this works in our “wood chuck” example. The magic is in the source code: void CreateBinaryTree () part 1: tree construction word2vec’s CreateBinaryTree() I wrote this post to explain what I found. īefore reading about word2vec, I was familiar with Huffman coding as a means of lossless data compression, but I was confused about how exactly the tree is constructed, and then how it is used in word2vec’s “hierarchical softmax” method. For the “hierarchical softmax” method, a Huffman binary tree is used. One is the so called “hierarchical softmax” and the other is a process called “Noise Contrastive Estimation”. There are two major approaches to training the word2vec model. How does the huffman tree work in word2vec?

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed